The Platform (Russell, A Circumplex Model of Affect, 1980; Mehrabian & Russell, 1974)

Science that reads the face, not the mouth.

EchoDepth Insight is built on the same FACS and VAD foundation as the clinical science of emotion — adapted for commercial research and delivered entirely remotely.

The methods it replaces (focus groups, moderated interviews, culture surveys, post-session recall) measure what people are willing to say. EchoDepth measures the involuntary signal that precedes any verbal response. It combines facial coding, emotional analytics and biometric research software in one platform: the HR technology layer that reaches beneath survey data. It is compatible with and complementary to existing qualitative research methodology, and it delivers actionable insight rather than raw data.

The Science

44 Action Units. The universal language of feeling.

The Facial Action Coding System (FACS) was developed by Paul Ekman and Wallace Friesen — the same research foundation that informed decades of emotion science and clinical psychology. It maps every visible human facial movement to one of 44 Action Units (AUs).

EchoDepth Insight tracks all 44 AUs per video frame, per participant. Because AU activations are largely involuntary, and many cannot be consciously suppressed or manufactured, they give a signal that is independent of what the participant says they felt, or chooses to disclose.

This is the foundation of what makes EchoDepth different from every survey, rating scale or moderated discussion. Those methods measure self-report. EchoDepth measures the body.

- AU6 + AU12 — Genuine enjoyment (Duchenne smile vs social smile)

- AU1 + AU4 — Concern, worry, cognitive engagement

- AU5 + AU7 — Attention intensity and surprise

- AU17 + AU24 — Doubt, suppression, withheld response

- All 44 AUs tracked simultaneously at up to 30fps

Live AU activation — packaging stimulus

Strong genuine positive response to packaging design. AU6/12 co-activation confirms authenticity — social smile would show AU12 alone.
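To make the AU pairings above concrete, here is a minimal Python sketch of how co-activation could be read from one frame. It is an illustration only, not EchoDepth's implementation: the `AU_PATTERNS` table and the `label_frame` function are hypothetical names, and real systems work with activation intensities rather than a simple on/off set.

```python
# Hypothetical sketch: label one frame from its set of active Action Units.
# The pairings mirror the list above; names and structure are assumptions.

AU_PATTERNS = [
    ({6, 12}, "genuine enjoyment (Duchenne smile)"),
    ({1, 4}, "concern, worry, cognitive engagement"),
    ({5, 7}, "attention intensity and surprise"),
    ({17, 24}, "doubt, suppression, withheld response"),
]


def label_frame(active_aus):
    """Return the labels whose AU pattern is fully present in this frame."""
    active = set(active_aus)
    labels = [label for pattern, label in AU_PATTERNS if pattern <= active]
    # AU12 without AU6 is read as a social smile, not genuine enjoyment.
    if 12 in active and 6 not in active:
        labels.append("social smile")
    return labels


print(label_frame([6, 12]))  # ['genuine enjoyment (Duchenne smile)']
print(label_frame([12]))     # ['social smile']
```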

Output Model

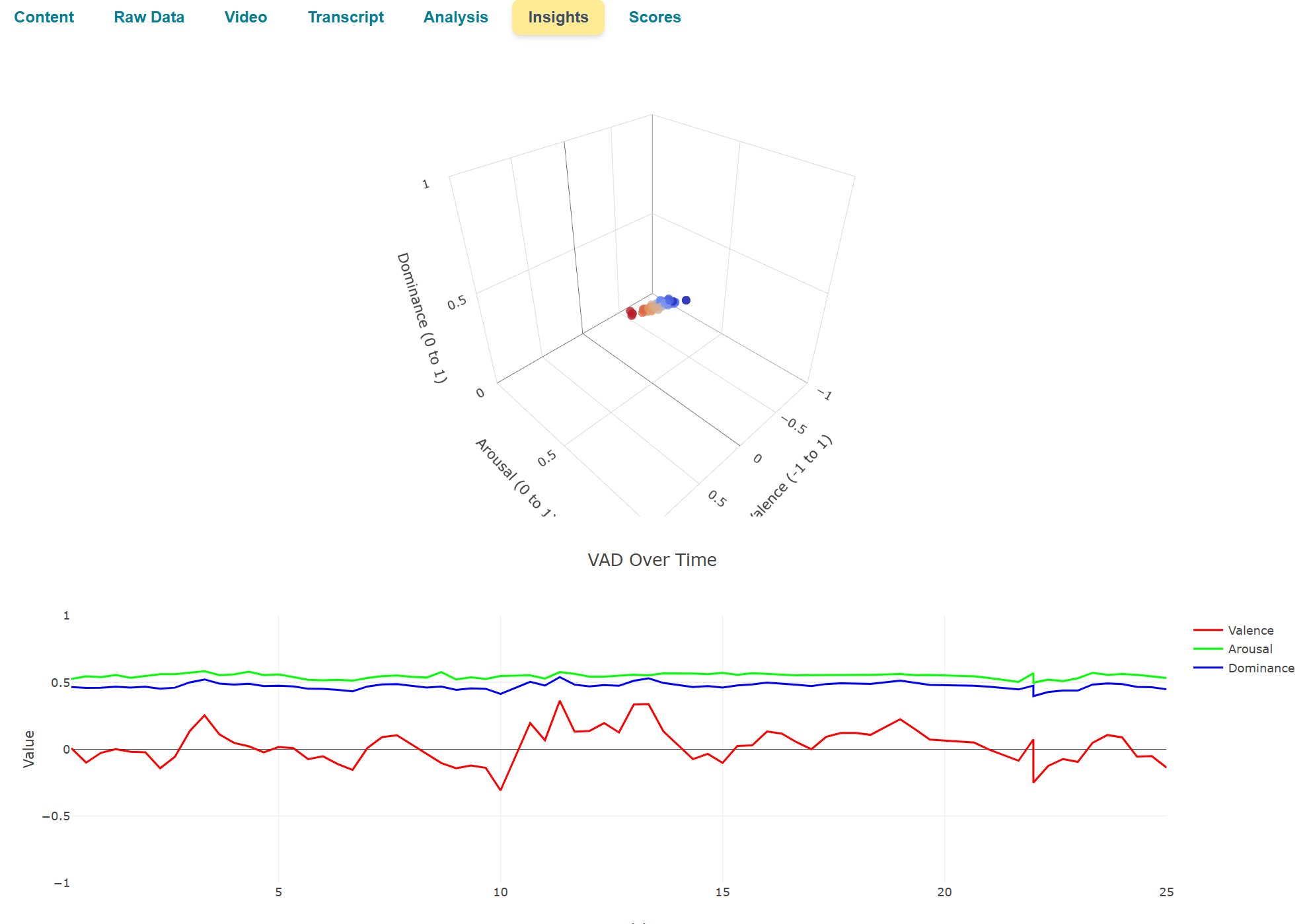

Three numbers that describe every emotional state.

EchoDepth outputs VAD scores continuously throughout a session — giving researchers a multidimensional emotional profile at every moment of stimulus exposure.

Valence

Positive-to-negative emotional polarity. High valence = positive, comfortable, pleased. Low valence = negative, discomfort, displeasure.

In research: Valence drops at specific moments reveal where concepts, copy or design elements trigger negative response — without the participant being able to articulate why.
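One way to picture a valence drop is as a fall of a set size within a short window of samples. The sketch below assumes valence is a list of numbers sampled at a fixed rate on a -1 to 1 scale. The scale, the `window` and `min_drop` values, and the `find_valence_drops` name are all assumptions made for illustration.

```python
# Hypothetical sketch: flag samples where valence has fallen sharply.
# Scale (-1 to 1), window size and threshold are illustrative assumptions.

def find_valence_drops(valence, window=2, min_drop=0.3):
    """Return the indices where valence sits at least `min_drop` below
    the highest value seen in the preceding `window` samples."""
    flagged = []
    for end in range(1, len(valence)):
        start = max(0, end - window)
        recent_peak = max(valence[start:end])
        if recent_peak - valence[end] >= min_drop:
            flagged.append(end)
    return flagged


series = [0.6, 0.62, 0.6, 0.2, 0.15, 0.5, 0.55]
print(find_valence_drops(series))  # [3, 4]
```

Each flagged index can then be mapped back to the stimulus timestamp to see which concept, copy line or design element was on screen.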

Arousal

Calm-to-excited activation level. High arousal indicates heightened engagement, which may be positive excitement or negative stress.

In research: Arousal combined with valence reveals whether engagement is positive (excited, interested) or negative (anxious, alarmed). Arousal without valence context is meaningless — EchoDepth gives you both.
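The point about reading arousal together with valence can be sketched as a simple quadrant rule. This is an assumption-laden illustration: it treats both scores as centred on 0, and the `classify_engagement` name and the two low-arousal labels are invented here, not taken from the platform.

```python
# Hypothetical sketch: interpret arousal only in the context of valence.
# A neutral point of 0.0 for both scores is an assumption.

def classify_engagement(valence, arousal, neutral=0.0):
    """Map a (valence, arousal) pair to a coarse engagement label."""
    if arousal > neutral:
        if valence >= neutral:
            return "positive engagement (excited, interested)"
        return "negative engagement (anxious, alarmed)"
    # Low-arousal labels are illustrative additions.
    return "calm, positive" if valence >= neutral else "calm, negative"


print(classify_engagement(0.5, 0.7))   # positive engagement (excited, interested)
print(classify_engagement(-0.4, 0.7))  # negative engagement (anxious, alarmed)
```

The same arousal value of 0.7 lands in opposite categories depending on valence, which is why arousal is not reported on its own.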

Dominance

Submissive-to-in-control sense of agency. High dominance = confidence, control. Low dominance = vulnerability, powerlessness.

In research: Dominance collapse during a product or messaging stimulus reveals confusion, overwhelm or perceived complexity — actionable for UX, packaging and communication design.

Remote Delivery

Global reach. Zero facility cost.

Traditional research facilities are expensive, logistically complex, and geographically limited. The participants you can afford to recruit are rarely the participants your research actually needs.

EchoDepth Insight sessions are delivered entirely remotely via any device with a standard camera. Participants join from anywhere in the world. There is no travel, no facility hire, no logistical overhead — and no difference in the quality of emotional signal.

- Any device with a standard webcam or front-facing camera

- Browser-based — no app installation required

- Global participant access — recruit from any geography

- Synchronous (live interview) and asynchronous (self-completion) modes

- Multi-participant sessions for comparative analysis

- Integrated consent capture and participant management

Session types supported

Live 1:1 or 1:few session with a researcher or AI-moderated protocol. Emotional response captured throughout the conversation.

Participant views concepts, packaging, advertising, video or written material. Moment-to-moment emotion curve generated per stimulus.

Multiple concepts exposed in sequence. Emotional comparison across concepts with carryover effect analysis.

Same participants tracked over time — before, during and after campaign exposure or product launch. Emotional response change tracked longitudinally.

Privacy & Governance

Consented. Time-bound. No raw video retained.

No raw video stored

Video is processed in memory. No frames are ever written to disk. Only VAD scores and AU activations are output and stored.
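The pattern described here, scoring each frame in memory and keeping only derived numbers, might look roughly like the sketch below. Everything in it is hypothetical: `FrameScore`, `process_session` and the `score_frame` callable stand in for whatever the platform actually uses, and the 30 fps default is taken from the "up to 30fps" figure quoted earlier on this page.

```python
# Hypothetical sketch: score frames in memory and keep only derived values.
# `score_frame` is a placeholder for a real model call; it is an assumption.
from dataclasses import dataclass


@dataclass(frozen=True)
class FrameScore:
    timestamp_s: float
    valence: float
    arousal: float
    dominance: float
    active_aus: tuple


def process_session(frames, score_frame, fps=30):
    """Turn an iterable of frames into a list of score records.

    Only the derived scores are appended; the records hold no
    reference to the frame data itself.
    """
    records = []
    for index, frame in enumerate(frames):
        valence, arousal, dominance, aus = score_frame(frame)
        records.append(
            FrameScore(index / fps, valence, arousal, dominance, tuple(aus))
        )
    return records
```

Because `frames` can be a generator fed by the camera stream, no frame needs to exist beyond the moment it is scored.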

Explicit consent

Every participant consents digitally before the session begins. Consent is specific to the research purpose — not blanket agreement.

GDPR by design

Designed for UK and EU regulatory environments. Data residency options available for pharma and healthcare clients with specific requirements.

On-premise option

For organisations where data cannot leave the premises. Full platform capability with on-premise deployment — critical for pharma and defence clients.

Common Questions

Understanding the science behind EchoDepth.

What is FACS and why does it matter for research?

FACS (Facial Action Coding System) is the scientific gold standard for facial expression analysis. It maps every visible face movement to one of 44 numbered Action Units. Because AUs are largely involuntary — many cannot be consciously faked — they provide a reliable measure of genuine emotional response, independent of what the participant says or believes they felt.

What is VAD scoring in the context of research?

VAD stands for Valence (positive to negative), Arousal (calm to excited) and Dominance (submissive to in-control). EchoDepth Insight outputs continuous VAD scores throughout a research session, providing a three-dimensional emotional profile at every moment of stimulus exposure — not a single rating at the end.

How does a remote EchoDepth session work?

Participants join via any device with a standard webcam or front-facing camera — no app required. Consent is captured digitally. Stimuli are delivered in-session. EchoDepth captures 44 facial Action Units continuously, computes VAD scores in real time, and flags key emotional moments automatically. A structured report is generated with emotion timelines, group aggregates and segment comparisons.
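As a rough picture of the group aggregates and segment comparisons mentioned above, the sketch below averages per-participant score timelines. The data layout (a dict of participant id to a list of scores), the function names and the sample segments are assumptions made for the example, not the platform's report format.

```python
# Hypothetical sketch: aggregate per-participant timelines for a report.
# The data layout and function names are illustrative assumptions.
from statistics import mean


def group_timeline(timelines):
    """Average all participants sample by sample.

    Timelines are truncated to the shortest one so every average
    covers the same participants.
    """
    length = min(len(series) for series in timelines.values())
    return [mean(series[i] for series in timelines.values()) for i in range(length)]


def segment_means(timelines, segments):
    """Mean score per segment; `segments` maps participant id to segment name."""
    by_segment = {}
    for participant, series in timelines.items():
        by_segment.setdefault(segments[participant], []).extend(series)
    return {name: mean(values) for name, values in by_segment.items()}


valence = {"p1": [0.25, 0.5], "p2": [0.75, 0.25]}
print(group_timeline(valence))                          # [0.5, 0.375]
print(segment_means(valence, {"p1": "UK", "p2": "EU"}))  # {'UK': 0.375, 'EU': 0.5}
```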

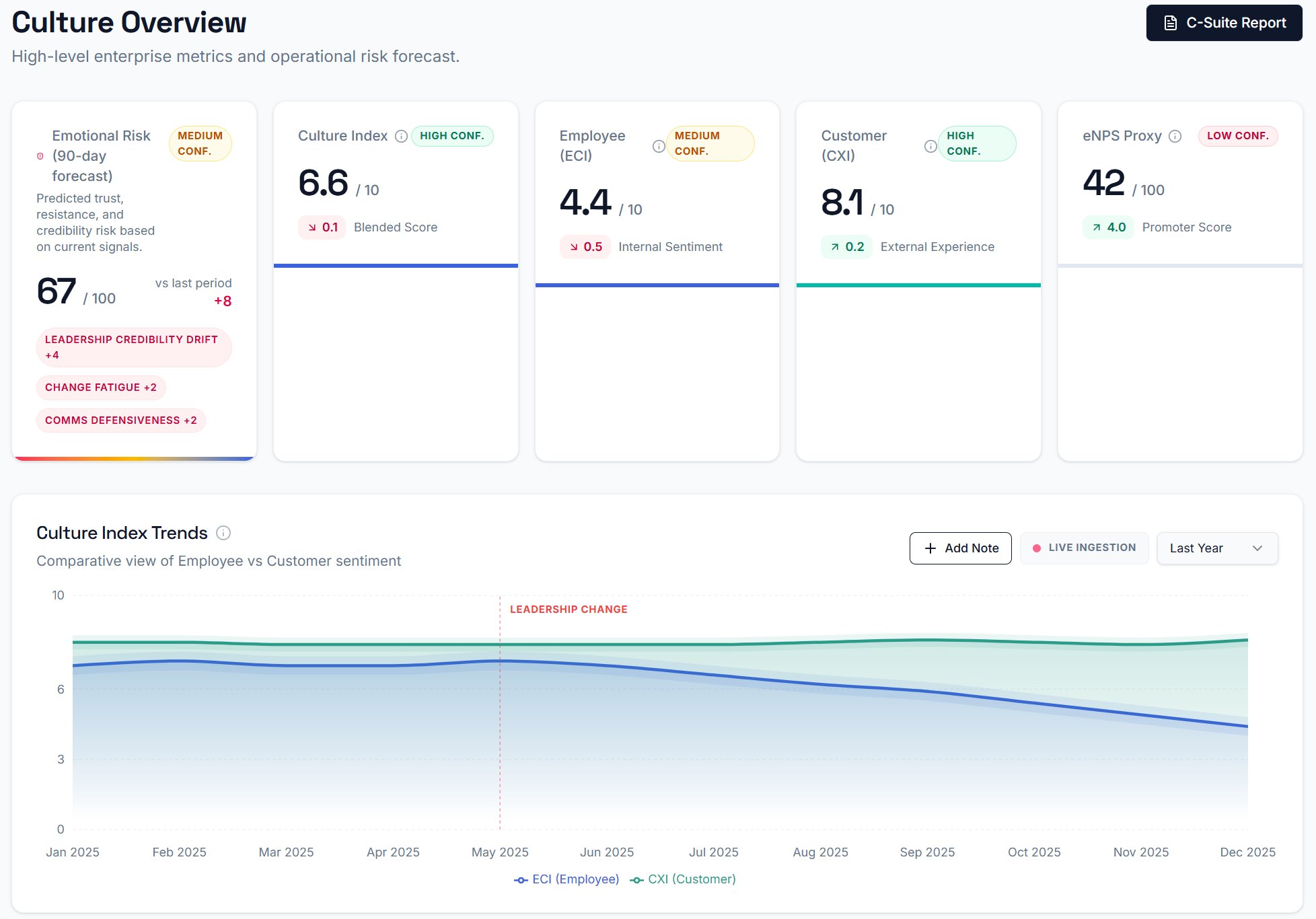

Platform output

Five views. One intelligence system.

EchoDepth produces five structured views per engagement. Every view is exportable as a board-ready PDF.

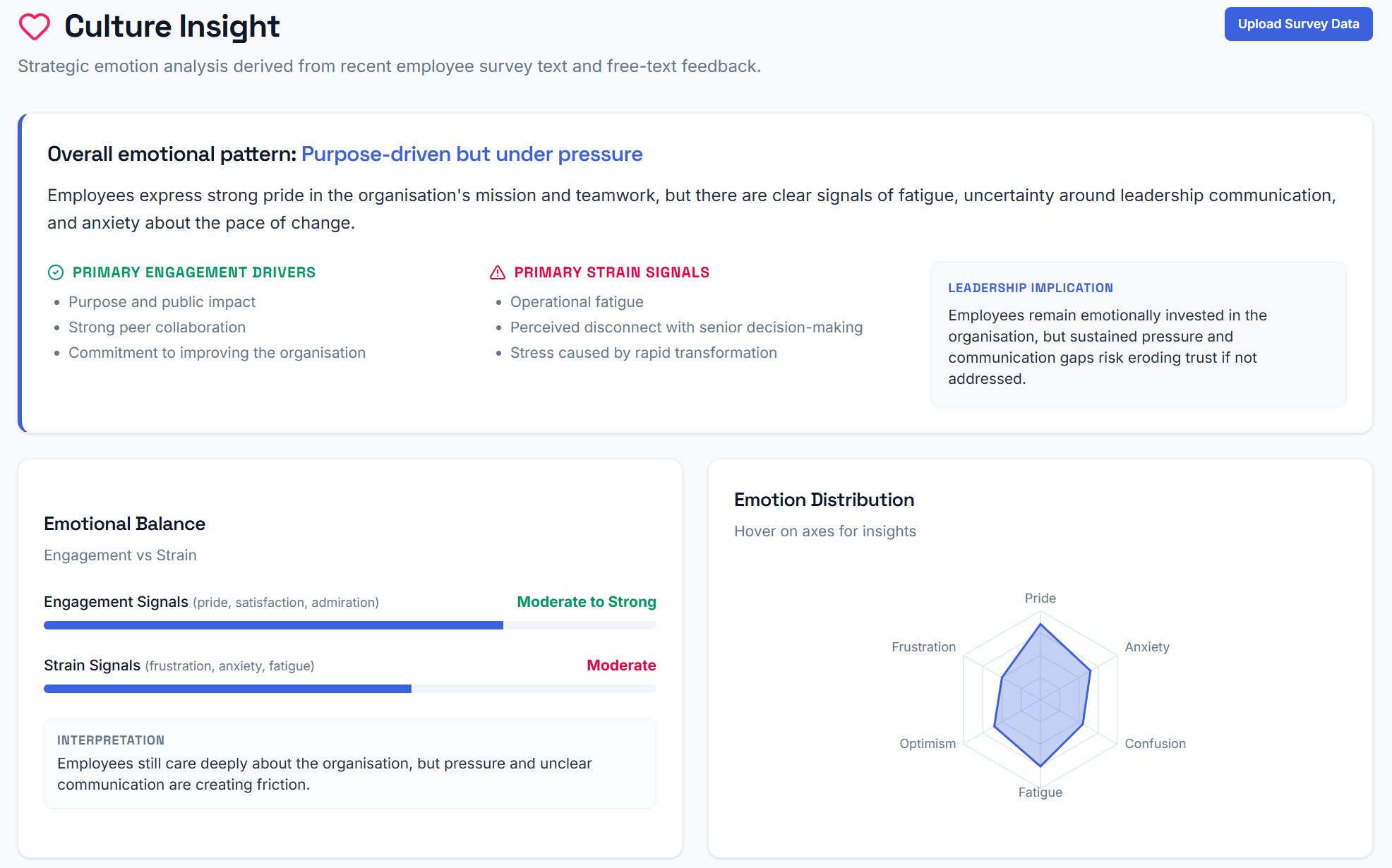

Culture Insight — emotional pattern and leadership implication

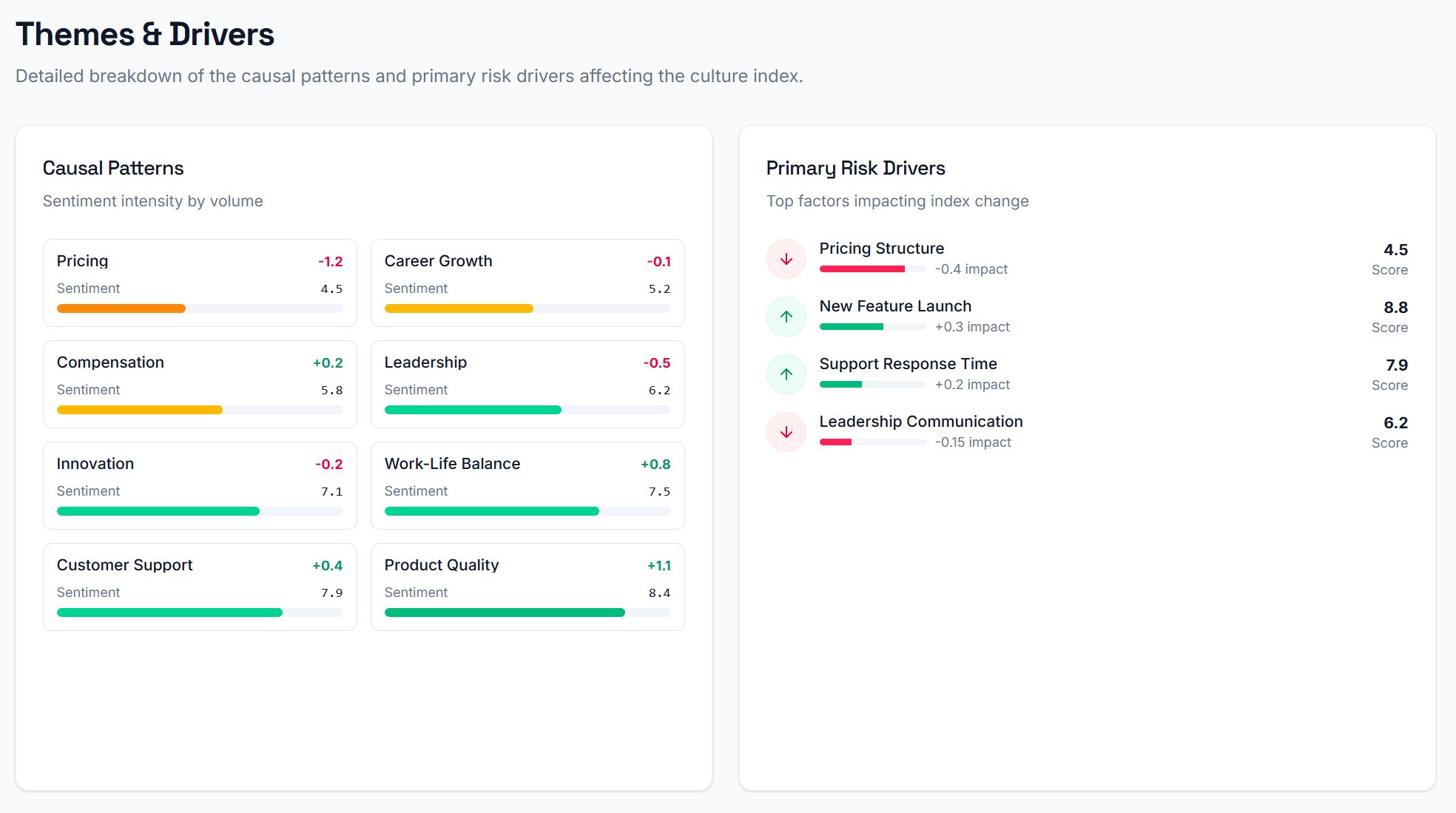

Themes & Drivers — causal patterns and risk driver ranking

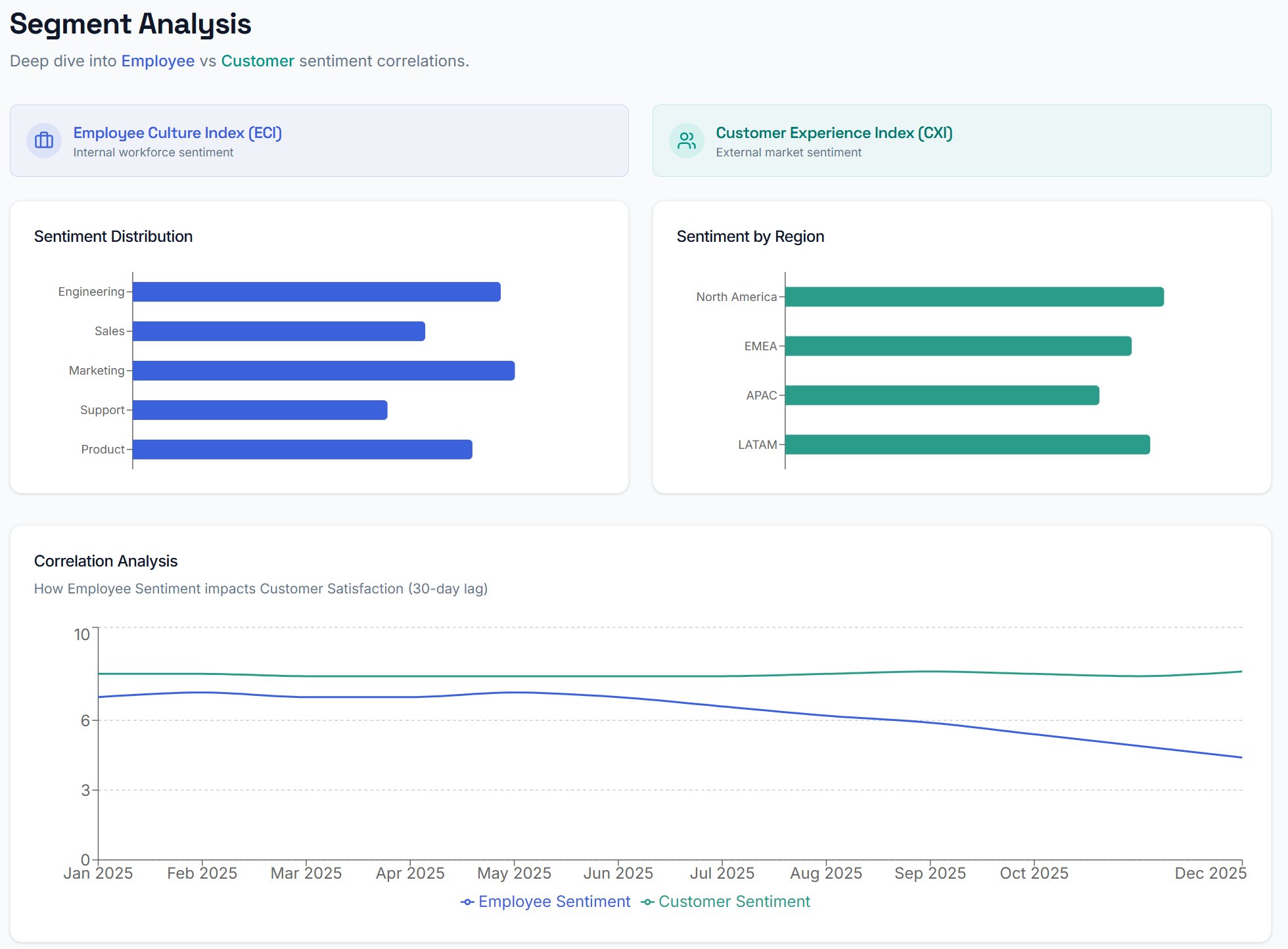

Segment Analysis — ECI vs CXI by team and region

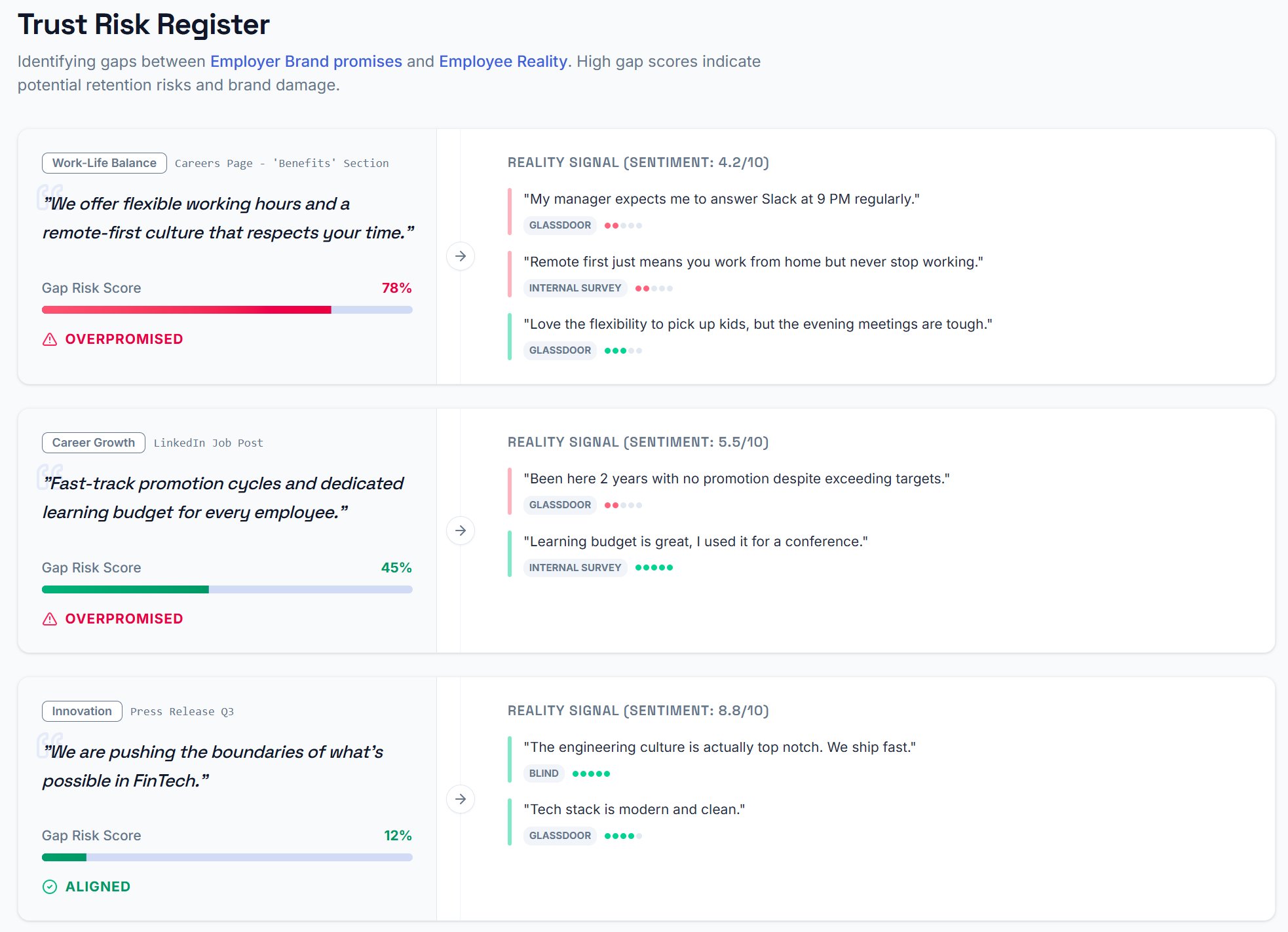

Trust Risk Register — brand promise vs employee reality

See what emotional capture looks like in your research.

Whether you're in pharma, FMCG or healthcare — we can show you what EchoDepth reveals that traditional methods miss.