What is FACS analysis — and why does it matter for research?

The Facial Action Coding System is the established scientific standard for describing facial behaviour and the basis for measuring emotion from the face. Here is how it works, what it measures, and why it changes what research is possible.

Published 8 April 2026 · Part of the EchoDepth Insights series · By Jonathan Prescott · Cavefish

The problem that FACS solves

Every research method that relies on what people say faces the same fundamental constraint: people often cannot accurately report what they feel. This is not dishonesty; it is a consequence of how the brain processes emotion. The emotional response to a stimulus begins within milliseconds, in subcortical brain regions that have no direct connection to language. By the time a participant forms a verbal or written response, they have already filtered, interpreted, and socially moderated what they experienced.

This is the articulation gap, and it is a central reason why focus groups, surveys, and post-session interviews so often produce data that diverges from actual behaviour. The Facial Action Coding System exists to measure what happens before that filter engages.

What FACS actually is

FACS was developed by Paul Ekman and Wallace Friesen and first published in 1978, following Ekman's earlier cross-cultural research on facial expression. It is a taxonomy, a systematic coding scheme, that describes every visually distinguishable movement of the human face in terms of the underlying muscle groups that produce it.

Each movement is assigned an Action Unit number. AU1, for example, is the inner brow raiser — the medial frontalis muscle. AU4 is the brow lowerer — the corrugator supercilii and depressor supercilii. AU12 is the lip corner puller — the zygomaticus major.

There are 44 Action Units in total, covering all major facial muscle groups. A single AU rarely tells you much on its own; the power of FACS lies in combinations. AU6 (cheek raiser) combined with AU12 (lip corner puller) produces the Duchenne smile, widely treated as the involuntary marker of genuine enjoyment. It is distinct from a social smile, which activates only AU12. AU4 paired with AU7 (upper lid tightener) and AU17 (chin raiser) can indicate suppressed distress. Combinations like these are much harder to produce or suppress deliberately than single voluntary expressions.
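Combination rules like these can be expressed as set matching over the AUs active in a frame. The sketch below is a minimal, hypothetical illustration. The AU numbers and the three combinations come from the text. The names `PATTERNS` and `match_patterns` and the encoding of a social smile as "AU12 without AU6" are assumptions for illustration, not EchoDepth's implementation.

```python
# Hypothetical pattern table: name -> (AUs that must be active, AUs that must be absent).
# AU numbers follow the FACS labels quoted in the text.
PATTERNS: dict[str, tuple[frozenset[int], frozenset[int]]] = {
    # AU6 cheek raiser + AU12 lip corner puller
    "Duchenne smile": (frozenset({6, 12}), frozenset()),
    # AU12 lip corner puller without AU6
    "social smile": (frozenset({12}), frozenset({6})),
    # AU4 brow lowerer + AU7 upper lid tightener + AU17 chin raiser
    "possible suppressed distress": (frozenset({4, 7, 17}), frozenset()),
}


def match_patterns(active_aus: set[int]) -> list[str]:
    """Return every pattern whose required AUs are all active and whose excluded AUs are not."""
    return [
        name
        for name, (required, excluded) in PATTERNS.items()
        if required <= active_aus and not (excluded & active_aus)
    ]


print(match_patterns({6, 12}))  # ['Duchenne smile']
print(match_patterns({12}))     # ['social smile']
```

Treating absence as a rule, as in the social-smile entry, is what lets a single AU12 activation be read differently depending on what else the face is doing.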

Why involuntary muscle activation matters

The key distinction in FACS analysis is between voluntary and involuntary facial muscle movements. When you consciously smile at someone you do not like, you activate the zygomaticus major (AU12). Without genuine positive affect, however, you typically do not also activate the orbicularis oculi (AU6), which raises the cheeks and creates the characteristic crow's feet of a real smile. Ekman's cross-cultural research found this distinction, known as the Duchenne marker, across cultures and showed that it is difficult to fake reliably.

This involuntary quality is what makes FACS analysis more resistant to social desirability bias than self-report. Participants in a research session can say they liked a product concept. They can write that a drug communication made them feel reassured. What they find much harder to do is suppress, at the same moment, the AU combinations associated with disgust or confusion.

From Action Units to VAD scores

Raw FACS analysis — manually coding Action Units — is extraordinarily time-consuming. A trained human FACS coder can analyse approximately one minute of video per hour of work. This made large-scale application impractical until computer vision and machine learning made automated AU detection viable.
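Putting the figures side by side shows why manual coding does not scale. This rough calculation uses the approximate rate above, about one hour of coder time per minute of video. It also uses the 30 frames per second quoted for EchoDepth. The 45-minute session length and the function names are hypothetical.

```python
# Rough arithmetic only. Rates come from the article; the session length is hypothetical.
MANUAL_HOURS_PER_VIDEO_MINUTE = 1.0  # approx. one hour of coding per minute of video
FRAMES_PER_SECOND = 30               # automated analysis rate quoted for EchoDepth


def manual_coding_hours(video_minutes: float) -> float:
    """Approximate human coder time for a recording of the given length."""
    return video_minutes * MANUAL_HOURS_PER_VIDEO_MINUTE


def frames_to_code(video_minutes: float) -> int:
    """Number of frames in the recording at the stated frame rate."""
    return round(video_minutes * 60 * FRAMES_PER_SECOND)


session = 45  # a hypothetical 45-minute research session
print(manual_coding_hours(session))  # 45.0
print(frames_to_code(session))       # 81000
```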

EchoDepth performs automated FACS analysis at up to 30 frames per second using any standard webcam, mapping AU activations in real time to the VAD (Valence-Arousal-Dominance) emotional model. Valence captures the negative-to-positive dimension (0.0 to 1.0), arousal captures calm to activated (0.0 to 1.0), and dominance captures feeling controlled to feeling in control (0.0 to 1.0).
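Each dimension is reported on a 0.0 to 1.0 scale, and frames arrive at up to 30 per second. A natural data shape is therefore one record per frame, averaged into coarser windows for reporting. The sketch below assumes that shape. `VAD`, `per_second`, and the plain per-second mean are illustrative choices, not EchoDepth's output format or API.

```python
from dataclasses import dataclass
from statistics import fmean


@dataclass(frozen=True)
class VAD:
    """One Valence-Arousal-Dominance reading, each dimension on a 0.0-1.0 scale."""
    valence: float
    arousal: float
    dominance: float

    def __post_init__(self) -> None:
        # Reject readings outside the documented 0.0-1.0 range.
        for name in ("valence", "arousal", "dominance"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be in [0.0, 1.0], got {value}")


def per_second(frames: list[VAD], fps: int = 30) -> list[VAD]:
    """Average consecutive per-frame readings into one reading per second of video.

    A trailing partial second is averaged over however many frames it has.
    """
    seconds = []
    for start in range(0, len(frames), fps):
        window = frames[start:start + fps]
        seconds.append(VAD(
            fmean(f.valence for f in window),
            fmean(f.arousal for f in window),
            fmean(f.dominance for f in window),
        ))
    return seconds
```

Averaging smooths frame-to-frame noise but also blurs brief reactions. An analysis aimed at split-second responses would keep the per-frame series as well.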

A VAD score of V:0.72 / A:0.45 / D:0.68 describes a moderately positive, moderately activated, high-agency emotional state: genuine interest with a sense of control. A score of V:0.19 / A:0.78 / D:0.22 describes a low-valence, high-arousal, low-dominance state, the signature of acute distress or fear. The scores themselves are numerical outputs computed from measured facial muscle activations, not from what the participant says.
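The two example scores can serve as reference points for labelling new readings. The minimal sketch below assumes a nearest-prototype approach using Euclidean distance in VAD space. The `PROTOTYPES` table and `nearest_state` function are hypothetical. With only two prototypes, every reading receives one of two labels, so treat this as an illustration of the geometry rather than a usable classifier.

```python
import math

# The two reference states described in the text, as (valence, arousal, dominance).
# Using them as labelled prototypes is an illustrative assumption.
PROTOTYPES = {
    "interest with a sense of control": (0.72, 0.45, 0.68),
    "acute distress or fear": (0.19, 0.78, 0.22),
}


def nearest_state(score: tuple[float, float, float]) -> str:
    """Label a VAD score with the closest prototype by Euclidean distance."""
    return min(PROTOTYPES, key=lambda label: math.dist(score, PROTOTYPES[label]))


print(nearest_state((0.65, 0.50, 0.60)))  # interest with a sense of control
print(nearest_state((0.25, 0.70, 0.30)))  # acute distress or fear
```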

What FACS analysis enables in research

The practical consequence of FACS-based emotional measurement is that research can now capture what was previously uncapturable: the genuine, pre-verbal, involuntary response to a stimulus at the moment it occurs.

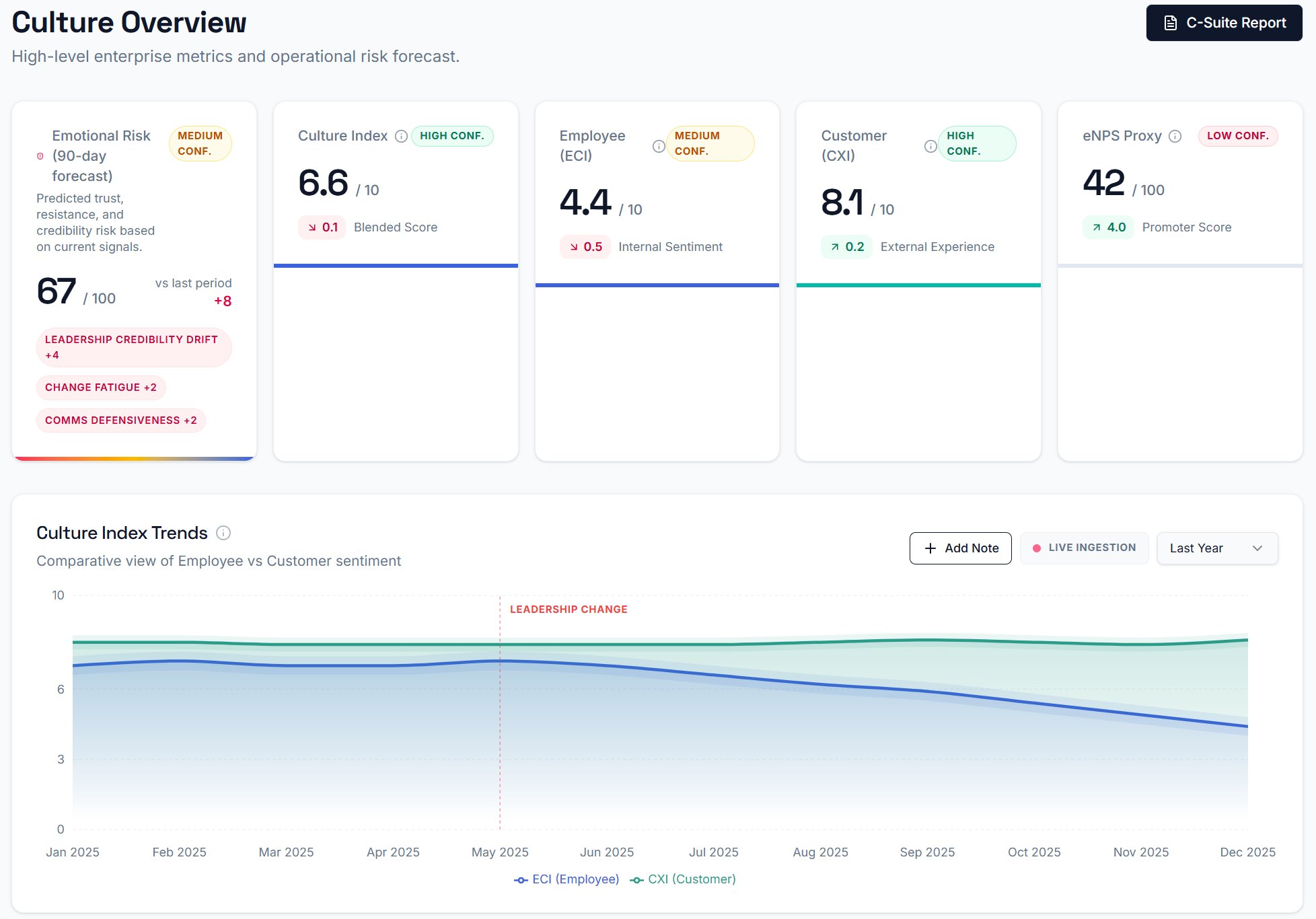

In pharma research, this means detecting when a patient communication triggers fear — not after the session when they report feeling reassured, but at the specific second during the video when the side effect voiceover plays. In FMCG product testing, it means separating the "interesting but wouldn't buy" response (high arousal, moderate valence, low dominance) from genuine desire (high valence, high dominance). In people and culture measurement, it means surfacing the Disapproval and Contempt signals underlying a diplomatically worded survey response — what EchoDepth calls text-emotion divergence.
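Both the FMCG distinction and text-emotion divergence can be written as simple rules over VAD scores. The sketch below rests on several assumptions. "Low", "moderate", and "high" are cut at 0.4 and 0.6. Text sentiment has already been scored by some separate model on the same 0.0 to 1.0 valence scale. A gap above 0.3 counts as divergence. All of these thresholds and function names are hypothetical; the article does not describe how EchoDepth defines either signal.

```python
def band(x: float, low: float = 0.4, high: float = 0.6) -> str:
    """Bucket a 0.0-1.0 score into low / moderate / high (illustrative cut-offs)."""
    if x < low:
        return "low"
    if x > high:
        return "high"
    return "moderate"


def purchase_signal(valence: float, arousal: float, dominance: float) -> str:
    """Apply the two FMCG profiles described in the text to one VAD reading."""
    v, a, d = band(valence), band(arousal), band(dominance)
    if v == "high" and d == "high":
        return "genuine desire"
    if a == "high" and v == "moderate" and d == "low":
        return "interesting but wouldn't buy"
    return "unclassified"


def text_emotion_divergence(text_valence: float, face_valence: float,
                            threshold: float = 0.3) -> bool:
    """Flag a response whose stated sentiment and facial valence differ by more than `threshold`."""
    return abs(text_valence - face_valence) > threshold


print(purchase_signal(0.8, 0.5, 0.75))      # genuine desire
print(purchase_signal(0.5, 0.8, 0.3))       # interesting but wouldn't buy
print(text_emotion_divergence(0.8, 0.3))    # True
```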

FACS analysis does not replace qualitative research. It adds a layer of measurement that was previously available only in university neuroscience laboratories. The articulation gap still exists; FACS makes it visible, measurable, and actionable.

The training data question

One legitimate challenge in FACS-based emotional AI is cultural calibration. Ekman's original research argued for universal basic emotions expressed consistently across cultures. Subsequent research has identified meaningful cultural variation in emotional display rules — the social norms that govern when and how emotions are expressed publicly. A research participant from a culture with strong emotional suppression norms will produce different baseline AU patterns than one from a more expressively permissive culture.

EchoDepth's training data spans 14 cultural cohorts across six countries, with culturally calibrated baseline models. This does not make cultural bias disappear — no emotional AI system can claim that — but it makes the model's assumptions transparent and the output more reliable across diverse research populations than a single-culture training set would produce.
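A common way to account for differences in baseline expressiveness is to compare each reading with a resting baseline rather than an absolute threshold. The minimal sketch below assumes z-score normalisation of one AU's intensity against baseline statistics. Those statistics could come from a participant's own neutral period or from a cohort reference. This is a swapped-in, generic technique: the article does not describe how EchoDepth's culturally calibrated baseline models work, so `Baseline` and `normalise` are hypothetical.

```python
from dataclasses import dataclass
from statistics import fmean, pstdev


@dataclass(frozen=True)
class Baseline:
    """Hypothetical resting-state statistics for one Action Unit's intensity."""
    mean: float
    std: float

    @classmethod
    def from_samples(cls, samples: list[float]) -> "Baseline":
        # Population standard deviation of the neutral-period samples.
        return cls(fmean(samples), pstdev(samples))


def normalise(intensities: list[float], baseline: Baseline) -> list[float]:
    """Express intensities as deviations from the baseline, in baseline standard deviations."""
    if baseline.std == 0:
        # A perfectly flat baseline has no scale, so only subtract the mean.
        return [x - baseline.mean for x in intensities]
    return [(x - baseline.mean) / baseline.std for x in intensities]


# Hypothetical AU12 intensities from a participant's neutral period.
subdued = Baseline.from_samples([0.1, 0.2, 0.1, 0.2])
print(normalise([0.35], subdued))
```

Under this scheme, the same raw intensity registers as a larger deviation for someone whose resting expression is subdued. That is the effect a cultural or individual baseline is meant to capture.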

Key takeaway

FACS analysis measures the involuntary facial muscle activations that precede and accompany emotional experience — before any social moderation, verbal processing, or conscious reporting occurs. Combined with VAD scoring, it produces numerical, comparable emotional output from what was previously an uncapturable signal. That is what makes it the foundation of serious emotional AI research.


Frequently asked questions

What does FACS stand for?

Facial Action Coding System — the scientific taxonomy of facial muscle movements developed by Ekman and Friesen.

How many Action Units does EchoDepth track?

44 Action Units per frame, covering all major facial muscle groups.

Does the participant need special hardware?

No. Any standard webcam at 720p or above is sufficient. No wearables, sensors, or specialist equipment are required.