Research methodology

Why focus groups fail to predict market success — and what to do instead.

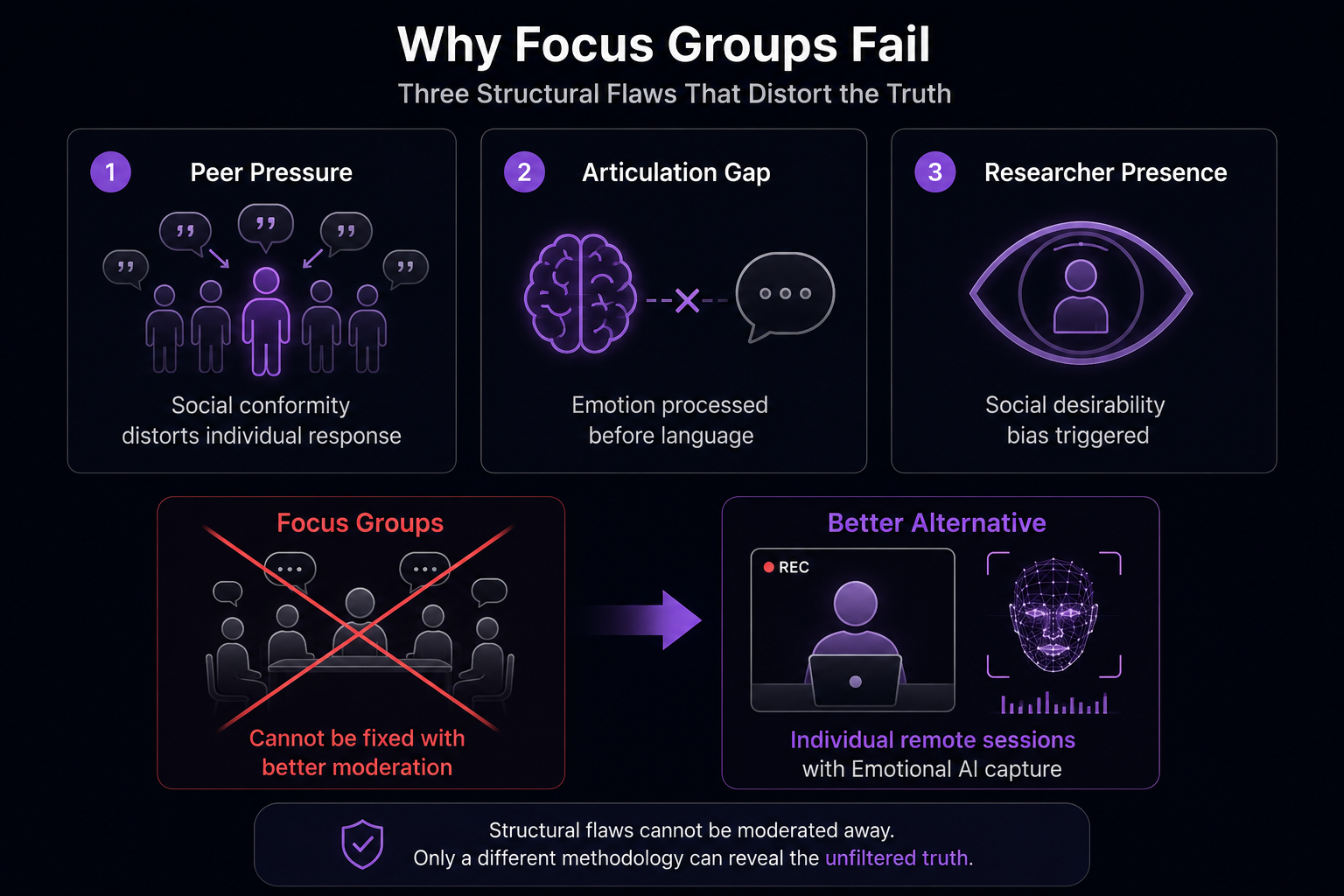

Focus groups have three structural problems that cannot be fixed with better facilitation, more skilled moderators, or more comfortable venues. The problems are inherent to the method — and they explain why focus group approval consistently diverges from market performance.

Published April 2026 · By Jonathan Prescott, Cavefish · Part of the EchoDepth Insights series

The record: approximately 80–90% of new consumer products fail within 12 months of launch (Nielsen). Most of them were preceded by research, including focus groups, that predicted success. The failure does not originate in the market. It originates in the research room, where the methodology cannot distinguish genuine purchase intent from socially acceptable approval.

The three problems no facilitator can fix

Problem 1: Peer pressure and group conformity

The group setting is the first and most obvious problem. Participants hear each other's responses. They adjust accordingly. When one articulate, confident participant says "I think it's quite interesting" with mild enthusiasm, the next three participants produce variations of the same sentiment — even if they felt something quite different before the first person spoke.

This is not dishonesty. It is normal social cognition. Human beings are wired to calibrate their expressed opinions against the group they are in — it is a survival mechanism, not a research artefact. The focus group room creates exactly the conditions that trigger this behaviour: strangers, observation, a facilitator with implicit authority, and the knowledge that responses are being recorded. All of these factors push participants toward the socially safest available response.

The socially safest response in a product research context is almost always some variant of "interesting." Interesting is non-committal. Interesting cannot be wrong. Interesting does not embarrass anyone. And interesting, multiplied across eight participants in a room, produces a research report that cannot predict which products will sell and which will not.

Problem 2: The articulation gap

Emotional experience is processed before language. When a participant sees a product concept for the first time, their felt response occurs in the 200–500 millisecond window immediately following exposure — before any verbal processing has begun. By the time the facilitator asks "what did you think of that?", the participant is no longer describing a feeling. They are constructing a verbal account of a feeling they have already partially forgotten.

This is the articulation gap: the difference between what someone felt and what they can accurately describe in words. The gap exists in all self-report research, but focus groups widen it. Participants must describe complex, often ambiguous emotional responses on the spot, in public, to a stranger. The result is a compressed, socially moderated version of the actual experience, which was richer, faster, and more ambivalent than any verbal account can capture.

The articulation gap explains why focus group responses often lack the nuance that shows up later in usage data. Participants did feel something. They simply could not describe it accurately — and they did not know they could not.

Problem 3: Social desirability bias

Social desirability bias is the tendency to give responses that will be positively received by others, rather than responses that accurately describe one's experience. In focus groups, it operates at two levels simultaneously.

At the peer level, participants adjust toward what they perceive the group to approve of. At the authority level, they adjust toward what they perceive the facilitator or brand wants to hear. Even when facilitators are scrupulously neutral, the research context itself (being recruited by a brand to evaluate its product) creates an implicit pressure to be positive.

This is not a moderation problem. Better-trained facilitators can reduce the authority-level effect, but they cannot remove it. And they cannot remove the peer-level effect at all, because it operates between participants rather than through the facilitator.

The consequence: research that cannot predict behaviour

The combined effect of these three problems is a systematic bias toward positive, undifferentiated responses. Focus groups reliably produce "interesting" and "I like it" — and reliably fail to produce the signal that matters commercially: genuine purchase desire.

The gap between "interesting" and "I would buy this" is the gap between a failed launch and a successful one. A research method that cannot distinguish these two states cannot predict market performance. That is not a reflection of poor execution — it is the structural limitation of asking people to describe their emotional response rather than measuring it.

The EchoDepth approach

Measure the response. Don't ask for it.

EchoDepth captures the involuntary facial muscle response that occurs in the 200–500 ms window following stimulus exposure: before peer pressure has operated, before articulation has compressed the experience, and before social desirability has filtered the response. The measurement is individual, remote, and physiological. It cannot be consciously edited by the participant.

What you can legitimately use focus groups for

Focus groups are not worthless — they are misapplied. They are good at specific things that other methods do not do well:

- Language exploration: finding the words and phrases that resonate with a target audience, which can then inform communications and copy.

- Hypothesis generation: identifying issues and themes worth investigating further with more reliable methodology.

- Concept refinement: understanding which elements of a concept are confusing or unclear — not whether the concept is genuinely desired.

- Qualitative depth: exploring the context, history and associations that participants bring to a topic, which quantitative tools cannot surface.

Using focus groups for these purposes is not the problem. The problem is treating focus group approval as a prediction of market performance, which the method structurally cannot provide.

What to use instead

The most powerful replacement for focus-group-led concept research combines individual emotional response capture with one-to-one qualitative depth, removing both the social distortion and the articulation gap:

- Individual emotional AI capture: each participant views the concept alone, without peer influence. Their involuntary facial response is scored in real time — producing genuine emotional signal before any verbal processing occurs.

- One-to-one depth interview: after emotional capture, a brief structured interview explores context, associations and language — without the group conformity effect.

- Cross-participant VAD (valence, arousal, dominance) comparison: the emotional data from all participants is scored and compared, separating concepts that drew a genuinely high-valence response from concepts that drew only a socially acceptable "interesting."
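To make the comparison step concrete, here is a minimal sketch of how per-participant scores might be aggregated per concept. It is an illustration under stated assumptions, not EchoDepth's scoring method: it assumes each participant's facial response to a concept has already been reduced to valence, arousal and dominance scores normalised to [-1, 1], and it ranks concepts by mean valence alone. The `VAD` class, the `compare_concepts` function, the concept names and the 0.3 threshold are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical per-participant reading for one concept. Valence, arousal and
# dominance are assumed to be pre-normalised to the range [-1, 1].
@dataclass(frozen=True)
class VAD:
    valence: float
    arousal: float
    dominance: float

# Assumed (arbitrary) cut-off separating a clearly positive response from a muted one.
HIGH_VALENCE_THRESHOLD = 0.3


def compare_concepts(responses: dict[str, list[VAD]],
                     threshold: float = HIGH_VALENCE_THRESHOLD) -> list[tuple[str, float, str]]:
    """Rank concepts by mean valence across participants and label each one.

    Only valence drives the ranking in this sketch; arousal and dominance are
    carried in the data but ignored here.
    """
    results = []
    for concept, readings in responses.items():
        avg_valence = mean(r.valence for r in readings)
        label = "high-valence" if avg_valence >= threshold else "muted"
        results.append((concept, round(avg_valence, 3), label))
    # Highest mean valence first, so the strongest concepts lead the list.
    return sorted(results, key=lambda row: row[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical scores for two concepts, three participants each.
    responses = {
        "concept_a": [VAD(0.6, 0.5, 0.2), VAD(0.4, 0.3, 0.1), VAD(0.5, 0.6, 0.0)],
        "concept_b": [VAD(0.1, 0.1, 0.0), VAD(0.0, 0.2, 0.1), VAD(0.2, 0.0, 0.0)],
    }
    for row in compare_concepts(responses):
        print(row)  # ('concept_a', 0.5, 'high-valence') then ('concept_b', 0.1, 'muted')
```

Averaging per concept is the simplest possible aggregation; a real analysis would likely also look at the spread across participants, since a concept that splits the sample can produce the same mean as one that leaves everyone lukewarm.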

This combination is faster than a focus group programme, cheaper per research question answered, and produces findings that correlate with market behaviour rather than diverging from it.

Client perspective

"Using EchoDepth from Cavefish allows us to validate ideas quickly, minimising the risk of launching a product or idea."

Gethin Thomas

CEO, Iterate



EchoDepth Insight

Run your next concept test without a focus group.

Individual, remote, emotionally accurate. Book a 30-minute call — we will show you what EchoDepth would surface on your specific research challenge.