Concept testing & NPD

How to validate a product concept before you launch — without trusting the focus group.

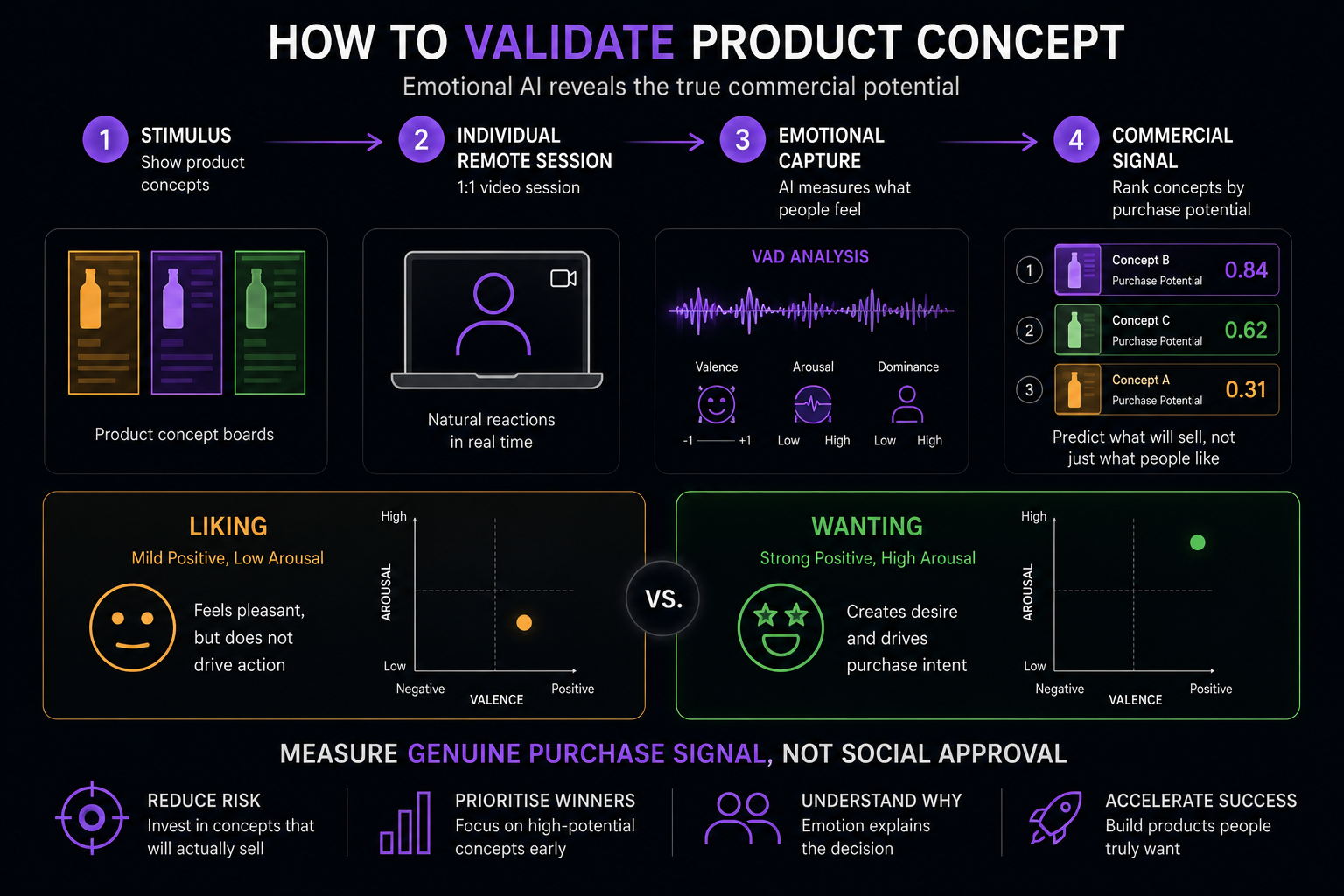

Traditional concept testing produces approval. It cannot produce purchase signal. The two are not the same — and the gap between them is where most new product launches fail. Here is how emotional AI closes that gap, and how to get a genuine answer in days rather than weeks.

Published April 2026 · By Jonathan Prescott, Cavefish · Part of the EchoDepth Insights series

The context: Approximately 80–90% of new consumer product launches fail within 12 months, despite positive preceding research (Nielsen, Cambridge Innovation Advisors). The majority of these failures were preceded by concept test scores that predicted success. Something is systematically wrong with how we validate ideas before committing to launch.

The problem: concept testing measures liking, not wanting

Standard concept testing — whether run as a focus group, an online survey, or a monadic test — asks participants to evaluate a concept and indicate how much they like it, how interested they are, or how likely they are to purchase. The responses look like purchase signal. They are not.

What concept testing actually measures is a combination of social approval, intellectual interest, and hypothetical purchase intent. All three of these are different from genuine desire. Genuine desire is a physiological state: elevated arousal, strong positive valence, a sense of agency and wanting. You cannot reliably access it by asking someone to predict what they would do.

Research consistently shows that purchase intention scores from concept tests are poor predictors of actual purchase behaviour. The correlation between "top-2-box purchase intent" and in-market conversion is low enough that experienced researchers treat concept scores as directional indicators at best. The reason is not that the researchers are doing something wrong. It is that the methodology cannot measure what it needs to measure.

The "interesting but wouldn't buy" problem

There is a specific failure mode that FMCG, innovation and NPD teams encounter repeatedly. A concept goes through research. It scores well — participants describe it as interesting, different, something they'd try. It passes the internal gate. It launches. It fails.

In VAD terms, "interesting but wouldn't buy" has a recognisable signature. Moderate positive valence: the concept is received positively. Low to moderate arousal: there is no urgency, no pull, no wanting. Low dominance: the participant does not feel a strong sense of agency toward the concept. This emotional pattern — mild positive interest without genuine desire — produces exactly the kind of positive concept test scores that lead to failed launches.

Genuine purchase desire has a different signature. High positive valence. High arousal — the response is immediate, strong, and physiologically activated. High dominance — the participant feels drawn toward the concept rather than passively interested in it. This is what EchoDepth captures from the involuntary facial response in the 200–500ms window after first exposure to a concept.
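The two signatures above can be expressed as a simple rule. The sketch below is illustrative only: the score scales, the cut-off values and the `classify_signature` helper are assumptions made for this example, not EchoDepth's actual scoring model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VAD:
    valence: float    # assumed scale: -1.0 (negative) to 1.0 (positive)
    arousal: float    # assumed scale: 0.0 (calm) to 1.0 (activated)
    dominance: float  # assumed scale: 0.0 (passive) to 1.0 (drawn toward)


# Hypothetical cut-offs, chosen for illustration only.
HIGH = 0.6
MODERATE_VALENCE = 0.2
LOW_DOMINANCE = 0.4


def classify_signature(score: VAD) -> str:
    """Label a VAD score with one of the two signatures described in the text."""
    # Genuine desire: high on all three dimensions.
    if score.valence >= HIGH and score.arousal >= HIGH and score.dominance >= HIGH:
        return "genuine desire"
    # Positive reception without urgency or a sense of agency.
    if (score.valence >= MODERATE_VALENCE
            and score.arousal < HIGH
            and score.dominance < LOW_DOMINANCE):
        return "interesting but wouldn't buy"
    return "other"


print(classify_signature(VAD(valence=0.8, arousal=0.9, dominance=0.7)))
print(classify_signature(VAD(valence=0.4, arousal=0.3, dominance=0.2)))
```

In practice any such thresholds would need calibrating against in-market outcomes rather than being set by hand.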

How to validate a concept with emotional AI

The process is straightforward. It does not require new technology infrastructure, specialist facilities, or an extended research programme. It works alongside your existing concept testing methodology, or as a standalone validation step.

Define the research question

Agree the specific decision this validation needs to support — which concept to progress, which target segment to prioritise, or which of several executions has the strongest emotional pull. The tighter the question, the faster and cheaper the research.

Prepare stimulus materials

Stimulus can be concept boards, packaging designs, product descriptions, advertising storyboards, or rendered product visuals. EchoDepth evaluates any stimulus presented on screen, so no physical prototypes are needed.

Capture individual emotional response

Each participant views the concepts individually, via a browser link on their own device. No facility, no group, no facilitator. EchoDepth scores 44 facial Action Units per frame in real time — capturing the genuine emotional response at first exposure, before social moderation or verbal rationalisation has occurred. 20–30 participants per segment is typically sufficient for directional ranking.
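To make the idea of a first-exposure window concrete, here is a minimal sketch of how per-frame scores might be averaged over the 200–500 ms after a concept appears. The `(timestamp_ms, score)` frame format and the `first_exposure_mean` helper are hypothetical, introduced only for this example; the article does not describe EchoDepth's internal data format.

```python
from statistics import mean


def first_exposure_mean(frames, onset_ms, start_ms=200, end_ms=500):
    """Average the scores of frames that fall inside the first-exposure window.

    frames:   list of (timestamp_ms, score) tuples (hypothetical format)
    onset_ms: timestamp at which the concept appeared on screen
    Returns None when no frame falls inside the window.
    """
    lo, hi = onset_ms + start_ms, onset_ms + end_ms
    window = [score for t, score in frames if lo <= t <= hi]
    return mean(window) if window else None


# Concept shown at t = 1000 ms; only the frames at 1250 ms and 1400 ms count.
frames = [(1000, 0.0), (1250, 0.4), (1400, 0.6), (1700, 0.9)]
print(first_exposure_mean(frames, onset_ms=1000))
```

Frames outside the window are ignored, which is what keeps later, more deliberate reactions out of the first-exposure score.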

Receive structured concept ranking

Within five working days you receive VAD scores per concept, an emotional ranking across the set, identification of which concepts produced genuine desire and which produced only "interesting," and specific recommendations about which to progress and why. The aim is to turn data into information, and information into knowledge the team can act on.
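As a rough illustration of how per-participant VAD scores could be rolled up into a concept ranking, the sketch below averages each dimension per concept and orders concepts by their weakest dimension. The input format, the `rank_concepts` helper and the "weakest dimension" ordering rule are all assumptions for this example, not the method EchoDepth uses.

```python
from collections import defaultdict
from statistics import mean


def rank_concepts(responses):
    """Rank concepts from per-participant VAD scores.

    responses: list of (concept, valence, arousal, dominance) tuples,
               one per participant per concept (hypothetical format).
    Returns a list of (concept, (mean_v, mean_a, mean_d)), strongest first.
    """
    by_concept = defaultdict(list)
    for concept, v, a, d in responses:
        by_concept[concept].append((v, a, d))

    # Mean of each dimension across participants, per concept.
    summary = {
        concept: tuple(mean(column) for column in zip(*rows))
        for concept, rows in by_concept.items()
    }

    # Assumed rule: a concept is only as strong as its weakest dimension,
    # since genuine desire is described as high on all three.
    return sorted(summary.items(), key=lambda item: min(item[1]), reverse=True)


responses = [
    ("Concept A", 0.8, 0.7, 0.9),
    ("Concept A", 0.6, 0.9, 0.7),
    ("Concept B", 0.5, 0.2, 0.3),
    ("Concept B", 0.3, 0.4, 0.1),
]
for concept, (v, a, d) in rank_concepts(responses):
    print(f"{concept}: valence={v:.2f} arousal={a:.2f} dominance={d:.2f}")
```

Ordering by the weakest dimension penalises concepts that are liked but not wanted; a weighted sum would be an equally defensible choice.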

What you get that traditional concept testing cannot provide

Genuine first-exposure signal

The emotional response captured in the 200–500ms after concept exposure — before any verbal processing, social adjustment, or rationalisation has occurred. This is the closest thing to a prediction of genuine market desire available from any research method.

Concept ranking by real desire

Which concept produced high-valence, high-arousal response — genuine wanting — vs which produced moderate positive interest. This distinction is invisible to any survey-based concept test and is the primary commercial differentiator.

Speed

A complete concept validation study — design, recruitment, sessions, analysis, report — in 10–15 working days. A proof-of-concept on existing stimulus materials in 5 working days. No facility booking, no moderator scheduling, no travel.

Actionable output

The output is not raw data to interpret but a structured report with specific, prioritised recommendations: which concept to progress, which to refine, which to discontinue. The team can act on it before the next gate.

Client perspective

"Using EchoDepth from Cavefish allows us to validate ideas quickly, minimising the risk of launching a product or idea."

Gethin Thomas

CEO, Iterate

EchoDepth Insight

Validate your next concept before you commit to launch.

Send us the concept stimulus. We will show you which has genuine desire behind it — and which is just interesting — within five working days.