Defence training & human performance

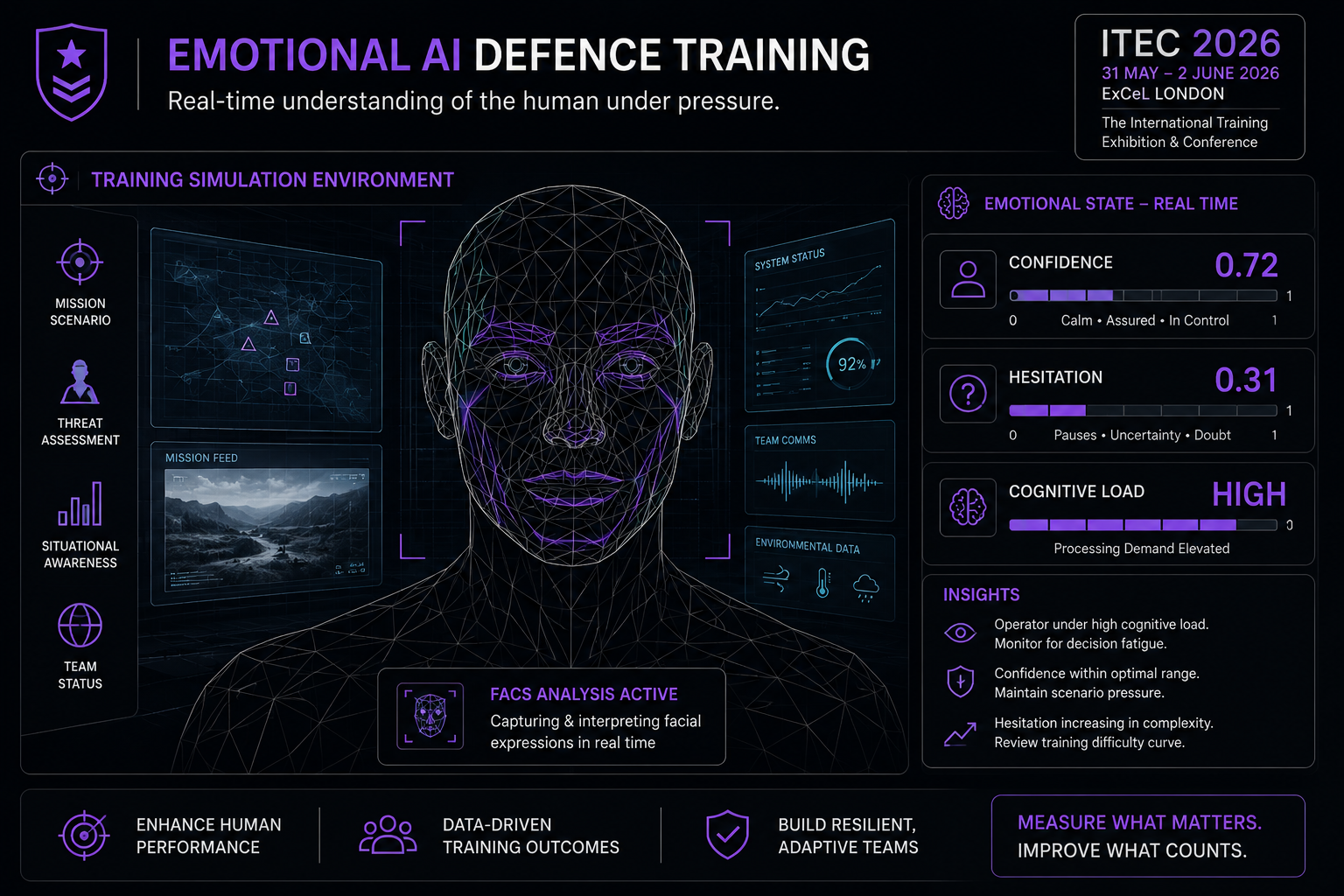

Emotional AI in defence training: what presenting at ITEC 2026 taught me.

I presented at Europe's largest defence training conference and told the audience they'd been measuring understanding wrong. Here's what happened, and what the audience response revealed about where this technology is actually heading.

Published April 2026 · By Jonathan Prescott, Cavefish · Reflections from ITEC 2026, ExCeL London

Note for JP: This article is pre-drafted. After the talk on 15 April, add 2–3 sentences of real detail to each [PLACEHOLDER] section below — a specific question from the audience, a reaction that surprised you, a moment that landed differently than expected. Everything else is ready to publish. Remove this note box before publishing.

The room

ITEC 2026 is Europe's largest dedicated event for defence training and simulation technology. The Human Performance Theatre at ExCeL London holds [PLACEHOLDER: approximate number] people. The audience on 15 April was a mix of [PLACEHOLDER: brief description — e.g. "military training officers, simulation specialists, and procurement leads from across NATO member states"].

I opened with a question I suspect most of them had never been asked about their own discipline: what if the way you've been measuring whether training has worked is structurally incapable of telling you the truth?

[PLACEHOLDER: one sentence about the room's initial reaction — silence, scepticism, recognition?]

The argument that landed

The session title was "Feel to know: Using emotion to measure real understanding." The core argument: understanding arrives emotionally before it arrives verbally. The moment a concept clicks happens in the 200–500 millisecond window before anyone can write it down, tick an assessment box, or tell their trainer they've got it.

Every test, every assessment, every after-action review measures the rationalisation of that moment, not the moment itself. Each captures what a learner can demonstrate in controlled conditions, after processing, after the emotional experience of learning has already occurred and partially faded.

[PLACEHOLDER: one specific point from the talk that got the strongest response — a statistic, a demo moment, a question you asked the audience?]

"Emotion signals genuine understanding more accurately than any survey or test. AI can now measure that signal — in real time, individually, without disrupting the training environment."

What the audience pushed back on

The most searching questions from any defence audience are about ethics. Rightly so. Measuring the involuntary physiological response of military personnel — even in training — raises questions that deserve straight answers.

[PLACEHOLDER: the specific question or concern raised from the floor. Was it about data retention? Individual profiling? Consent in hierarchical environments? Use in performance management?]

The answer I gave: the ethical framework for emotional AI in training is not fundamentally different from the ethical framework for any biometric data use. It rests on transparency, purpose limitation, data minimisation, and appropriate governance. What matters is the governance structure around the measurement, not the measurement itself. EchoDepth operates under UK GDPR with ICO registration, and we provide a governance framework as standard for every deployment.

The more interesting follow-up question was [PLACEHOLDER: the second question that surprised you or that you found genuinely interesting].

What this sector is actually ready for

Defence training has spent thirty years refining simulation fidelity — making the environment as close to real as possible. The implicit assumption is that if the environment is right, learning will follow. Emotional AI adds a different kind of fidelity: not environmental, but cognitive. Not simulating the experience, but measuring whether it is being genuinely processed.

[PLACEHOLDER: one specific application or use case that came up in conversation — either in the Q&A, or in the corridor after the session. What did someone say they'd actually use this for?]

The most striking thing about presenting this to a defence audience is that they are not sceptical about the technology — they are sceptical about the governance. That is, frankly, the right thing to be sceptical about. And it is a solvable problem.

What it means for organisations outside defence

The ITEC session focused on training and human performance. But the underlying argument — that understanding arrives emotionally before it arrives verbally, and that existing measurement methods systematically miss that signal — applies equally to pharma research, FMCG concept testing, culture measurement, and anywhere else that self-report data is used as a proxy for genuine experience.

The question "have they understood?" is structurally identical to "do they genuinely want this?" and "are they actually satisfied?" and "do they really believe in the direction?" All of them are questions that verbal and written responses cannot reliably answer — and that emotional AI can.

About EchoDepth

EchoDepth Insight is the emotional AI research platform built by Cavefish. 44 facial Action Units. Real-time VAD (valence, arousal, dominance) scoring. Delivered entirely remotely via browser: no hardware, no facility, no specialist operator.
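
For readers who want a concrete picture of what scoring a response window could involve, here is a minimal Python sketch. It is not EchoDepth's implementation. The `VadSample` type, the `window_shift` function, the sample values, and the choice to compare against a pre-stimulus baseline are all assumptions made for illustration. The sketch takes timestamped VAD (valence, arousal, dominance) readings and reports how far the average reading in the 200 to 500 millisecond window after a stimulus moved from the average reading before it.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class VadSample:
    """One hypothetical reading. t_ms is time relative to the stimulus (negative = before)."""
    t_ms: float
    valence: float
    arousal: float
    dominance: float


def window_shift(samples, start_ms=200.0, end_ms=500.0):
    """Return the mean VAD in [start_ms, end_ms] minus the pre-stimulus mean.

    Returns None when either the baseline or the window has no samples,
    so the caller never mistakes missing data for "no reaction".
    """
    baseline = [s for s in samples if s.t_ms < 0]
    window = [s for s in samples if start_ms <= s.t_ms <= end_ms]
    if not baseline or not window:
        return None
    return {
        dim: mean(getattr(s, dim) for s in window)
        - mean(getattr(s, dim) for s in baseline)
        for dim in ("valence", "arousal", "dominance")
    }


# Illustrative, made-up readings: two before the stimulus, two inside the
# window, and one later reading that falls outside it and is ignored.
readings = [
    VadSample(-100, 0.0, 0.2, 0.5),
    VadSample(-50, 0.0, 0.2, 0.5),
    VadSample(250, 0.4, 0.6, 0.5),
    VadSample(400, 0.6, 0.8, 0.5),
    VadSample(800, 0.9, 0.9, 0.9),
]
shift = window_shift(readings)
```

Returning `None` for an empty baseline or window is a deliberate choice in this sketch: a dropped frame should surface as missing data, not as a flat response.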

The session

Feel to know: ITEC 2026 session page

Full session details, the core argument, and the technology behind the talk.

Related

What is emotional AI?

The science behind FACS, VAD scoring, and measuring genuine emotional response.

Insight

Why conventional methods miss the signal

The structural problems with self-report research — and why physiological measurement changes the picture.

EchoDepth Insight

Interested in emotional AI for training, research, or people programmes?

Book a 30-minute discovery call. We will show you what EchoDepth surfaces on your specific challenge.